3. Off-Nadir Angle/Elevation Angle

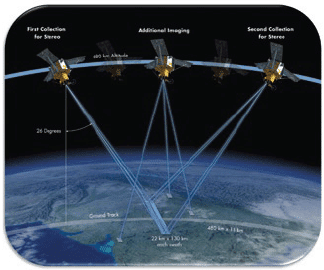

In practice, collecting an image at nadir, i.e., looking straight down at the target, doesn’t happen with high-resolution satellite imagery; satellite sensors always shoot at an angle. The ability to point off-nadir shortens imaging revisit times and, with some satellites, enables stereo collects for 3-D elevation modeling.

Satellite operators may report this either as “elevation angle,” where 90 degrees is looking straight down, or “off-nadir angle,” where 0 degrees is looking straight down. A typical minimum requirement is an elevation angle of 60 degrees, which is a 30-degree off-nadir angle. A high elevation angle (low off-nadir angle) is often desirable, especially in areas of high relief or tall buildings, to minimize what’s known as the building-lean effect.
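The relationship between the two conventions, and a rough sense of building lean, can be sketched in a few lines of Python. This is a minimal illustration, not an operator's formula: it treats the two angles as simple complements, as the text above does, and it assumes flat terrain and a vertical building. The function names are hypothetical.

```python
import math


def off_nadir_to_elevation(off_nadir_deg: float) -> float:
    """Convert an off-nadir angle to an elevation angle (degrees)."""
    return 90.0 - off_nadir_deg


def elevation_to_off_nadir(elevation_deg: float) -> float:
    """Convert an elevation angle to an off-nadir angle (degrees)."""
    return 90.0 - elevation_deg


def building_lean_m(height_m: float, elevation_deg: float) -> float:
    """Approximate horizontal offset of a rooftop from its base in the image.

    Assumption: flat ground and a vertical building, so the offset is
    height / tan(elevation angle).
    """
    if not 0.0 < elevation_deg <= 90.0:
        raise ValueError("elevation angle must be in (0, 90] degrees")
    return height_m / math.tan(math.radians(elevation_deg))


if __name__ == "__main__":
    for elevation in (60.0, 70.0, 80.0):
        lean = building_lean_m(100.0, elevation)
        print(f"elevation {elevation:.0f} deg "
              f"(off-nadir {elevation_to_off_nadir(elevation):.0f} deg): "
              f"100 m building leans about {lean:.1f} m")
```

Under these assumptions, a 100 m building imaged at the typical 60-degree minimum appears displaced by roughly 58 m, which is why tighter angle requirements matter in dense urban areas.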

However, the desire for a higher elevation angle must be weighed against the resulting loss of revisit opportunities. Requiring a 70-degree or higher elevation angle (an off-nadir angle of 20 degrees or less) decreases the number of potential attempts the satellite can make in a given time period, making a successful new collect less likely.

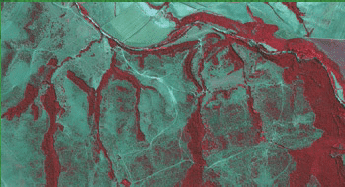

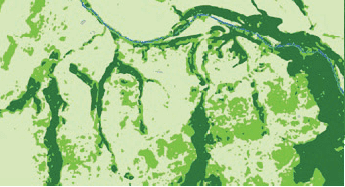

Project Example: Classification Maps

Classification maps are one of the most common types of products created from satellite imagery and can map broad categories of land cover, such as forest vs. agriculture, or specific plant species. They also can map land use such as urban, industrial, residential, suburban and open space. These types of “clutter” maps are used in radio-frequency modeling for wireless networks, among other applications. Traditionally, classification work has been spectral and pixel-based, so 11-bit, four-band imagery or better is used. Newer object-based image analysis methodologies can yield superior results, especially with high-resolution imagery and with image datasets that may only be available as three-band and/or 8-bit.

4. Sun Elevation

Sun elevation is the angle of the sun above the horizon. Imagery collected with low sun elevation angles may contain data that are too dark to be usable. A typical minimum sun elevation angle is 30 degrees, but adhering to this requirement means that northern latitudes above 35 degrees will have black-out periods during the winter months when imagery with an acceptable sun elevation angle can’t be collected.
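The latitude figure can be sanity-checked with a simple geometric bound. The sketch below is an approximation under stated assumptions: it uses the sun's elevation at local solar noon on the northern winter solstice, an axial tilt of 23.44 degrees, and ignores atmospheric refraction. The function names are hypothetical.

```python
AXIAL_TILT_DEG = 23.44  # Earth's axial tilt, used as the solstice declination


def noon_sun_elevation_winter_solstice(latitude_deg: float) -> float:
    """Sun elevation (degrees) at local solar noon on the northern winter
    solstice, for a northern-hemisphere latitude outside the tropics."""
    return 90.0 - latitude_deg - AXIAL_TILT_DEG


def blackout_latitude(min_sun_elevation_deg: float) -> float:
    """Lowest northern latitude (degrees) at which even the noon sun on the
    winter solstice falls below the required minimum elevation."""
    return 90.0 - AXIAL_TILT_DEG - min_sun_elevation_deg


if __name__ == "__main__":
    limit = blackout_latitude(30.0)
    print(f"30-degree minimum: noon bound is about {limit:.1f} degrees north")
```

For a 30-degree minimum, this noon bound comes out near 36.6 degrees north. Imaging satellites often pass over mid-morning rather than at solar noon, when the sun is somewhat lower, which is consistent with the slightly lower latitude quoted above.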

Decreasing the minimum required sun elevation angle to 15 degrees means that only northern latitudes above 50 degrees will have a black-out period. However, even a 30-degree sun angle is low for many applications. For example, increased shadow areas are problematic for classification and stereo projects. The problem is more pronounced in high-relief areas and in areas with taller objects, such as trees and buildings, where low sun elevation angles mean longer shadows will be cast.
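The effect of sun elevation on shadow length follows from basic trigonometry. As a rough sketch, assuming flat ground and a vertical object (the function name is hypothetical):

```python
import math


def shadow_length_m(object_height_m: float, sun_elevation_deg: float) -> float:
    """Length of the shadow cast on flat ground by a vertical object.

    Assumption: level terrain, so length = height / tan(sun elevation).
    """
    if not 0.0 < sun_elevation_deg <= 90.0:
        raise ValueError("sun elevation must be in (0, 90] degrees")
    return object_height_m / math.tan(math.radians(sun_elevation_deg))


if __name__ == "__main__":
    for sun_elevation in (15.0, 30.0, 60.0):
        length = shadow_length_m(20.0, sun_elevation)
        print(f"sun at {sun_elevation:.0f} deg: "
              f"20 m tree casts a shadow of about {length:.1f} m")
```

Under these assumptions, a 20 m tree casts a shadow of about 35 m at a 30-degree sun elevation and about 75 m at 15 degrees, so relaxing the minimum roughly doubles the shadowed ground behind each tall object.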

For some of the affected land masses, these black-out periods correspond to months with snow cover, making new collects during these times less desirable regardless. In areas such as Alaska, where sun angle and snow cover limit the window for optimal imaging, optical satellites currently are unable to meet the high demand for imagery.